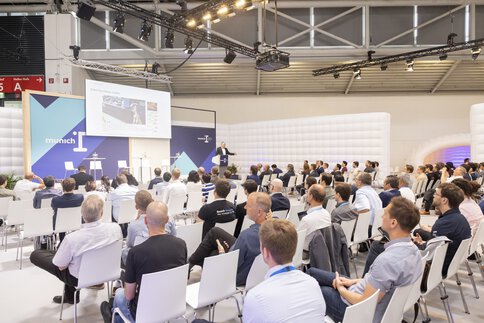

Hightech Summit

The high-tech platform for AI and robotics

The future of AI and robotics, the interaction between human and artificial intelligence, and responsible technological change.

Concrete applications in work, health, mobility, and the environment, as well as ethical issues in robotics and AI.

munich_i's collaborative software and hardware development competition for the global robotics community.

Under the theme "Intelligence empowering tomorrow," the high-tech platform munich_i provides a stage for the most acclaimed pioneers and their ideas. It facilitates knowledge transfer and collaboration and offers valuable networking opportunities for innovation drivers from science, business, politics, and society, because the complex challenges of the future can only be solved through joint initiatives and cooperation.

Hightech Summit


The expert panel at the munich_i Hightech Summit is both interdisciplinary and international. Visionaries from renowned companies and from a range of scientific disciplines will present and discuss the latest developments in AI and robotics.

Speakers 2025